ServiceNow AI Data Readiness: Why Your AI Agents Fail and How Workflow Data Fabric Fixes It

AI agents don’t fail because models are weak. They fail because the data underneath isn’t connected, contextualized, or controlled. This article explains how ServiceNow Workflow Data Fabric enables AI data readiness, what the Connect → Contextualize → Control framework means in practice, and where to start – whether you’re a CIO evaluating strategy or an architect planning implementation.

Table of Contents

- Why AI Data Readiness Is the #1 Blocker for Enterprise AI

- What Is ServiceNow Workflow Data Fabric?

- The Framework: Connect → Contextualize → Control

- From Workflows to Autonomous AI Agents: The Right Sequence

- ServiceNow AI Data Readiness in Practice: Industry Examples

- EU AI Act and Data Readiness: The Compliance Angle

- For Architects: Under the Hood of Workflow Data Fabric

- Where to Start: Three Data Readiness Questions Every Architect Should Answer

- Frequently Asked Questions

Why AI Data Readiness Is the #1 Blocker for Enterprise AI

There’s a pattern we see in almost every AI conversation with enterprise clients right now. The technology demo goes well. The pilot looks promising. Leadership gets excited. And then – somewhere between proof of concept and production – the project stalls.

Not because the model was wrong. Not because the use case didn’t make sense. But because the data underneath wasn’t ready.

A recent MIT study confirmed what practitioners have been seeing for years: the majority of enterprise AI pilots never make it to production – and the root cause isn’t model quality. It’s data fragmentation, inconsistency, and lack of governance. Gartner reinforces this, predicting that through 2026, organizations will abandon 60% of AI projects that are not supported by AI-ready data.

Most enterprises we work with have their data scattered across 5 to 15+ systems. Even organizations that invested heavily in a data warehouse or data lake typically find that only 60–70% of their enterprise data actually lives there. The rest is trapped in legacy systems, SharePoint folders, Confluence spaces, email threads – or in people’s heads.

The instinct is to solve this with more integration. Connect another API. Build another ETL pipeline. But integration alone doesn’t solve the ServiceNow AI data readiness problem. Connecting systems without giving that data context, governance, and semantic meaning just creates a faster path to bad decisions.

And it gets worse when you add AI. Layering intelligent agents across fragmented, ungoverned data doesn’t fix the mess – it exposes it. AI applied as a skin on top of broken data just amplifies the problem.

We see this pattern consistently in our own client engagements. When we helped modernize IT operations for Lithuanian Railways (LTG), the starting picture was textbook data fragmentation: a CMDB with incomplete and duplicate CIs, no unified ownership model, and limited visibility into service dependencies. Before any AI conversation was possible, the foundational data readiness work had to happen first.

Related reading: If your organization is still in the early stages of understanding how ServiceNow AI capabilities layer together – from Skills to Agents to Workflows – our Complete Guide to ServiceNow AI Skills, AI Agents & AI Workflows provides the foundational map.

What Is ServiceNow Workflow Data Fabric?

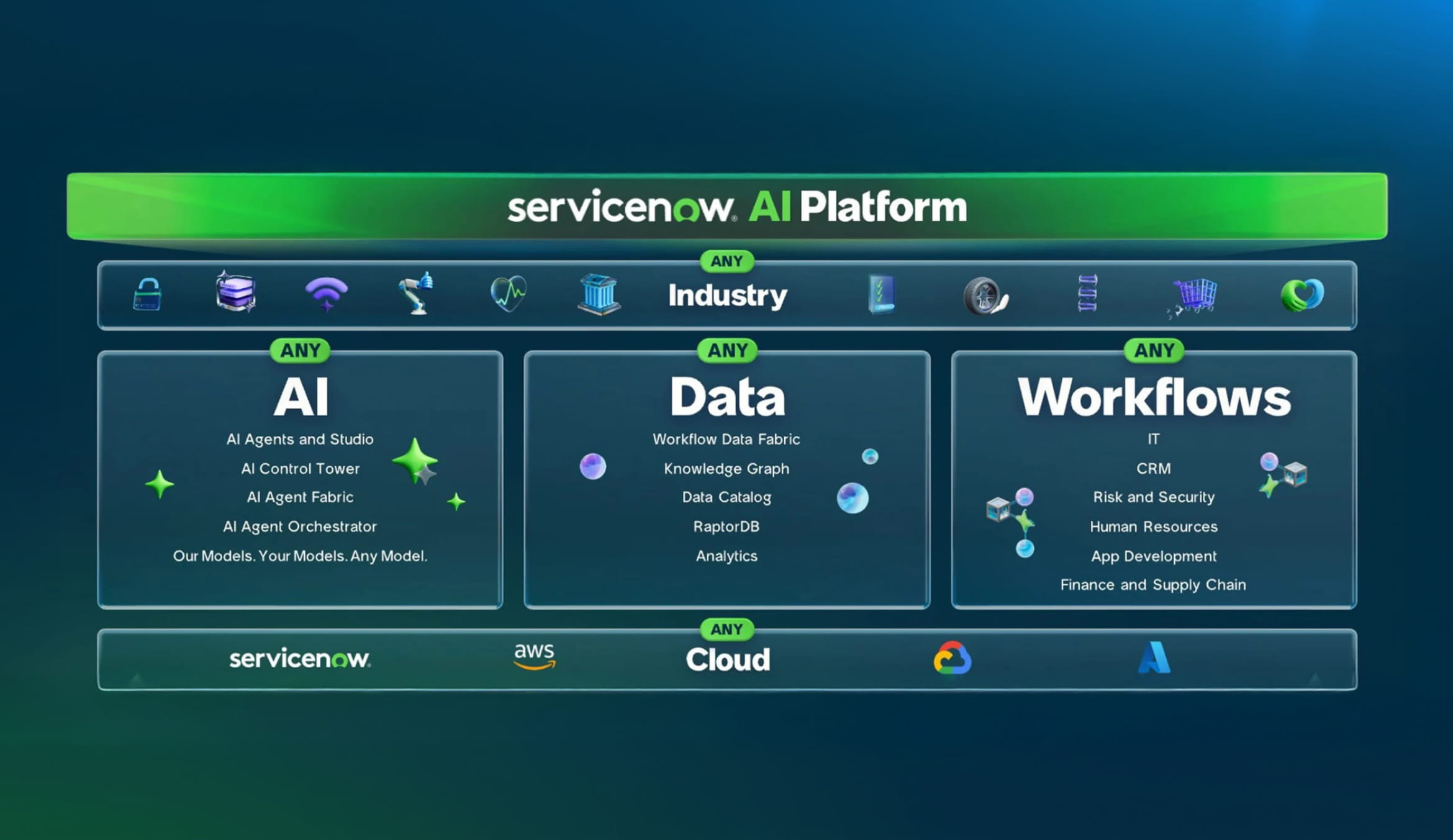

ServiceNow Workflow Data Fabric is the platform’s answer to enterprise data fragmentation. Announced in October 2024, it is an integrated data layer that connects all organizational data – regardless of location, format, or source system – to power AI agents and automated workflows from a single platform.

The core innovation: instead of extracting data from source systems and loading it into ServiceNow (traditional ETL), Workflow Data Fabric uses zero-copy connectors that query data where it lives. Your Snowflake data warehouse, Databricks analytics platform, SAP transactions, or Oracle databases stay in place while ServiceNow workflows access them in real time.

ServiceNow organizes Workflow Data Fabric around three foundational steps: Connect, Contextualize, and Control. Each step is positioned not as an optional enhancement but as a prerequisite for any organization moving toward agentic AI.

The Framework: Connect → Contextualize → Control

Step 1: Connect Your Data – Access Without Copying

The core philosophy of ServiceNow AI data readiness: leave data where it lives. Rather than replicating data into yet another silo, Workflow Data Fabric uses zero-copy connectors, stream connect (Apache Kafka integration), and external content connectors to orchestrate data in real time from a single platform.

This approach is vendor-agnostic and built on open standards and open-source projects, including the Model Context Protocol (MCP) and Apache technologies such as Kafka. The goal is access to any data, any system, any format – without forcing a migration or reshaping data to fit a proprietary stack.

The distinction matters for data readiness. Most platforms that claim to “connect” data are actually copying it. Every copy is another silo, another place where data drifts out of sync, another cost center, another compliance risk.
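To make the drift argument concrete, here is a minimal Python sketch. `SourceSystem`, `etl_snapshot`, and `LiveReference` are hypothetical names invented for illustration, not ServiceNow or SAP APIs. The sketch shows only the behavioral difference between copying records once and reading them from the system of record at query time.

```python
from dataclasses import dataclass, field


@dataclass
class SourceSystem:
    """Hypothetical stand-in for a system of record, e.g. an ERP vendor table."""
    records: dict[str, dict] = field(default_factory=dict)

    def get(self, key: str) -> dict:
        # Return a copy so callers cannot mutate the source by accident.
        return dict(self.records[key])


def etl_snapshot(source: SourceSystem) -> dict[str, dict]:
    """Copy every record at load time (classic ETL); later source changes are invisible."""
    return {key: dict(value) for key, value in source.records.items()}


class LiveReference:
    """Read-through access: every lookup goes back to the system of record."""

    def __init__(self, source: SourceSystem) -> None:
        self._source = source

    def get(self, key: str) -> dict:
        return self._source.get(key)


sap = SourceSystem({"V-100": {"name": "Acme Foods", "status": "active"}})
copied = etl_snapshot(sap)
live = LiveReference(sap)

# The vendor is blocked in the source system after the copy was taken.
sap.records["V-100"]["status"] = "blocked"

print(copied["V-100"]["status"])    # active  (stale copy)
print(live.get("V-100")["status"])  # blocked (current value)
```

The copy is not wrong at the moment it is taken. It simply stops being true as soon as the source changes, and nothing tells the consumer.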

Step 2: Contextualize Your Data – From Catalog to Context Engine

Connection without meaning is just plumbing. The second step is where ServiceNow’s approach to AI data readiness diverges from traditional data catalogs that simply index what exists.

The shift is toward a context engine – one that serves both humans and AI agents. This means attaching business definitions, metadata, relationships, and semantic meaning to every data element. An AI agent doesn’t just need to know that a table exists. It needs to understand what it’s looking at, why it matters, what it relates to, and what it’s allowed to do with it.
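One way to picture what “context” means for an agent is a metadata record that travels with each data element. The sketch below is a hypothetical structure (the `DataElementContext` class and its fields are our illustration, not a ServiceNow table or API) covering the questions above: a business definition, an accountable owner, related entities, and the actions an agent is allowed to take.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataElementContext:
    """Hypothetical context record attached to one data element."""
    name: str
    definition: str                                # what the element means in business terms
    owner: str                                     # who is accountable for it
    related_to: tuple[str, ...] = ()               # which entities it relates to
    allowed_actions: frozenset[str] = frozenset()  # what an agent may do with it

    def permits(self, action: str) -> bool:
        return action in self.allowed_actions


vendor_bank_account = DataElementContext(
    name="vendor.bank_account",
    definition="IBAN used for outgoing payments to an approved vendor",
    owner="Finance / Accounts Payable",
    related_to=("vendor", "payment_run"),
    allowed_actions=frozenset({"read_masked"}),
)

print(vendor_bank_account.permits("read_masked"))  # True
print(vendor_bank_account.permits("update"))       # False
```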

The technical backbone is the ServiceNow Knowledge Graph – a digital map connecting people, roles, processes, tasks, systems, and data assets in a web of relationships. This is what enables agents to move from being query tools to contextual decision-makers.
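Conceptually, a knowledge graph is a set of typed relationships that an agent can traverse. The minimal sketch below assumes a handful of hypothetical edges (not the actual Knowledge Graph schema) and uses breadth-first search to recover the chain that connects a person to a data asset. That chain is the kind of context an agent needs to explain why a record is relevant.

```python
from collections import deque
from typing import Optional

# Hypothetical (subject, relation, object) edges; not the ServiceNow Knowledge Graph schema.
EDGES = [
    ("alice", "has_role", "ap_clerk"),
    ("ap_clerk", "performs", "vendor_onboarding"),
    ("vendor_onboarding", "runs_on", "sap_s4"),
    ("vendor_onboarding", "uses", "vendor_bank_account"),
    ("sap_s4", "stores", "vendor_master"),
]


def neighbours(node: str) -> list[tuple[str, str]]:
    """Outgoing (relation, target) pairs for a node."""
    return [(rel, obj) for subj, rel, obj in EDGES if subj == node]


def context_path(start: str, target: str) -> Optional[list[str]]:
    """Breadth-first search returning the relationship chain from start to target."""
    queue = deque([(start, [start])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == target:
            return path
        for rel, nxt in neighbours(node):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [f"-{rel}->", nxt]))
    return None  # no relationship chain connects the two nodes


print(" ".join(context_path("alice", "vendor_master")))
# alice -has_role-> ap_clerk -performs-> vendor_onboarding -runs_on-> sap_s4 -stores-> vendor_master
```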

Here’s a practical example: the word “customer” means something different to sales, marketing, and finance. If humans in the same company can’t agree on what the term means, an AI agent operating across those departments has zero chance of getting it right without a semantic layer that resolves the ambiguity.
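A semantic layer resolves this by making the definition depend on context and by failing loudly when no agreed definition exists. The glossary and `resolve` function below are a hypothetical sketch of that behavior, not a ServiceNow feature, and the definitions are invented placeholders.

```python
# Hypothetical glossary: one business term, defined per department (placeholder definitions).
GLOSSARY = {
    ("customer", "sales"): "Account with at least one open or won opportunity",
    ("customer", "marketing"): "Contact who has opted in to communications",
    ("customer", "finance"): "Legal entity with an active billing account",
}


def resolve(term: str, department: str) -> str:
    """Return the agreed definition for this context, or fail loudly instead of guessing."""
    try:
        return GLOSSARY[(term.lower(), department.lower())]
    except KeyError:
        raise LookupError(f"No agreed definition of '{term}' for {department}") from None


print(resolve("Customer", "Finance"))  # Legal entity with an active billing account
```

Raising an error for an undefined term is deliberate: an agent that stops and asks is safer than one that silently picks the sales definition for a finance decision.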

Related reading: The importance of connecting AI to governed platform data – including CMDB, workflows, and processes – is central to how ServiceNow AI Agents operate in practice. For how agents collaborate across these connected data sources, see our guide on AI Agent Fabric.

Step 3: Control Your Data – Governance as Enabler, Not Blocker

This is the most counterintuitive – and most important – element of ServiceNow AI data readiness: data governance is not the thing slowing your AI down. It’s the thing that lets it scale.

The moment AI agents start taking actions (not just making suggestions), governance becomes non-negotiable. You need lineage – where data comes from, where it goes. You need policy enforcement, certified data sources, and a full audit trail. Without these, you get the horror stories: agents deleting databases, making decisions on stale data, exposing confidential information to the wrong audience.
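Lineage and freshness checks are most useful when they are evaluated before an action, not reconstructed afterwards. Below is a minimal sketch, assuming each value an agent consumes carries a hypothetical `Lineage` stamp (source, certification flag, extraction time). The field names and thresholds are illustrative.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Lineage:
    """Hypothetical lineage stamp carried with each value an agent consumes."""
    source_system: str
    certified: bool
    extracted_at: datetime


def usable_for_action(lineage: Lineage, max_age: timedelta, now: datetime) -> tuple[bool, str]:
    """Refuse uncertified or stale inputs before an agent acts on them."""
    if not lineage.certified:
        return False, f"{lineage.source_system} is not a certified source"
    age = now - lineage.extracted_at
    if age > max_age:
        return False, f"data is {age} old (limit {max_age})"
    return True, "ok"


now = datetime(2026, 4, 6, 12, 0, tzinfo=timezone.utc)
fresh = Lineage("SAP S/4HANA", True, now - timedelta(minutes=5))
stale = Lineage("SAP S/4HANA", True, now - timedelta(days=3))

print(usable_for_action(fresh, timedelta(hours=1), now))     # (True, 'ok')
print(usable_for_action(stale, timedelta(hours=1), now)[0])  # False
```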

But manual governance is genuinely too slow for AI-speed operations. ServiceNow’s answer is automated, workflow-driven governance: guardrails that are enforced programmatically, allowing agents to act with confidence while maintaining full auditability.
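“Enforced programmatically” can be as simple as a policy lookup that runs on every agent action and writes an audit entry whether the action is allowed or denied. This sketch uses a hypothetical classification-to-actions policy and an in-memory log. A real platform would persist the trail and manage policies centrally, and none of these names come from ServiceNow.

```python
from datetime import datetime, timezone

AUDIT_LOG: list[dict] = []

# Hypothetical policy: which actions an agent may take on data of a given classification.
POLICY = {
    "public": {"read", "update", "notify"},
    "internal": {"read", "update"},
    "confidential": {"read"},
}


def enforce(agent: str, action: str, classification: str) -> bool:
    """Check the policy and record every decision; unknown classifications are denied."""
    allowed = action in POLICY.get(classification, set())
    AUDIT_LOG.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "classification": classification,
        "allowed": allowed,
    })
    return allowed


print(enforce("vendor-agent", "update", "confidential"))  # False
print(len(AUDIT_LOG))                                      # 1
```

Denying by default for unknown classifications is the design choice that matters here: a missing policy should block an agent, not wave it through.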

The organizations that treat governance as a Phase 2 afterthought are the ones whose AI pilots never leave the sandbox. The ones that embed governance from Day 1 are the ones scaling.

Related reading: For the architectural perspective, see AI Security in ServiceNow: Governance Is No Longer Optional. For the practical governance framework, see Operating a ServiceNow Center of Excellence (CoE): Governance in Practice. And for the broader governance topology, Agentic AI & Governance Topology on ServiceNow maps the full picture.

From Workflows to Autonomous AI Agents: The Right Sequence

Once data readiness is in place through the Connect → Contextualize → Control framework, the progression toward autonomous AI becomes a matter of layering, not leaping.

ServiceNow has been a workflow engine for over 20 years. It ran over 80 billion workflows in the past 12 months alone. That foundation – deterministic, rule-based, proven at scale – is the base layer for AI data readiness.

The maturity path looks like this:

- Workflow automation – deterministic processes that replace manual, error-prone steps

- Agent-assisted workflows – AI agents support humans within existing workflows (suggesting, validating, surfacing context)

- Autonomous agents – agents handle entire process steps independently, governed by deterministic guardrails

The critical differentiator: ServiceNow agents act deterministically on semantically understood data, not probabilistically on raw unstructured inputs. When an agent knows – via the Knowledge Graph and semantic layer – exactly what a “warehouse transfer” means, which rules apply, and which approvals are required, it can act autonomously with confidence. That’s a different proposition from an LLM guessing its way through an API.

Related reading: For a deeper look at the progression from automation to autonomy, see From Automation to Autonomy: How ServiceNow Is Redefining AI at Work and From Ticket Chaos to Autonomous IT. For the practical path from Now Assist Skills to full AI Agents, see From Now Assist Skill to AI Agent: Building the Foundation of Agentic AI.

ServiceNow AI Data Readiness in Practice: Industry Examples

These case studies from ServiceNow’s customer adoption team illustrate the data readiness pattern concretely and mirror patterns we see in our own delivery work.

Vendor Master Data Management – Global Food Distributor

A global food distributor needed a simpler way to manage vendor master data across SAP – a process that typically requires expert-level navigation of complex ERP screens. They built a ServiceNow workspace on top of SAP using zero-copy connectors and the semantic layer. The result: a production-ready portal in six weeks – versus an estimated 8 to 12 months using traditional approaches. The next phase: adding AI agents that check for duplicate vendors before creation.
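The duplicate check planned for the next phase can be sketched with standard-library fuzzy matching. This is our illustration under stated assumptions (a `vat` field, a short list of legal-form suffixes, a 0.85 similarity threshold), not the distributor’s actual logic. In practice the agent would query existing vendors through the same zero-copy connection rather than an in-memory list.

```python
import re
from difflib import SequenceMatcher

LEGAL_SUFFIXES = {"gmbh", "ag", "ltd", "inc", "llc", "bv", "sa"}  # illustrative, not exhaustive


def normalise(name: str) -> str:
    """Lower-case, strip punctuation and common legal-form suffixes."""
    words = re.findall(r"[a-z0-9]+", name.lower())
    return " ".join(w for w in words if w not in LEGAL_SUFFIXES)


def likely_duplicates(candidate: dict, existing: list[dict], threshold: float = 0.85) -> list[dict]:
    """Flag vendors with the same VAT ID or a near-identical normalised name."""
    hits = []
    for vendor in existing:
        same_vat = bool(candidate.get("vat")) and candidate.get("vat") == vendor.get("vat")
        similarity = SequenceMatcher(
            None, normalise(candidate["name"]), normalise(vendor["name"])
        ).ratio()
        if same_vat or similarity >= threshold:
            hits.append({**vendor, "similarity": round(similarity, 2)})
    return hits


existing = [
    {"id": "V-100", "name": "Nordic Fresh Foods GmbH", "vat": "DE123456789"},
    {"id": "V-200", "name": "Baltic Grain Ltd", "vat": "LT100001234"},
]
new_vendor = {"name": "Nordic Fresh Foods", "vat": None}
print([v["id"] for v in likely_duplicates(new_vendor, existing)])  # ['V-100']
```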

Warehouse Transfer Orchestration – Pharmaceutical Company

A pharma company needed to automate cross-warehouse product transfers – a process with no built-in workflow in the underlying ERP, but with complex approval and business rule requirements. ServiceNow sits on top as the orchestration layer, with NLP-based interfaces allowing users to describe transfers in natural language. The key design principle: ServiceNow doesn’t recreate ERP business logic – it works on the API and business process layer, inheriting the underlying rules.

Campaign Stock Replenishment – Cosmetics Retailer

A retailer with hundreds of stores noticed consistent stock-outs during promotional campaigns. The root cause: disconnected data – sales analytics in Databricks, transactional data in SAP, with no unified view. ServiceNow connected to both via zero-copy connectors, unified the data in a single semantic model, and enabled predictive replenishment from nearby stores. Each campaign cycle now improves the prediction model.
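Once stock and forecast sit in one semantic model, the replenishment step itself can be simple. The sketch below assumes a hypothetical unified view per store (coordinates, ERP stock, analytics forecast) and uses a nearest-donor heuristic. It illustrates the idea and is not the retailer’s actual prediction model.

```python
from math import dist

# Hypothetical unified view: stock from the ERP, campaign demand forecast from analytics.
STORES = {
    "S1": {"xy": (0.0, 0.0), "stock": 40, "forecast": 120},
    "S2": {"xy": (1.0, 0.5), "stock": 200, "forecast": 60},
    "S3": {"xy": (8.0, 8.0), "stock": 300, "forecast": 50},
}


def replenishment_plan(stores: dict) -> list[tuple[str, str, int]]:
    """For each store forecast to run short, pull surplus from the nearest stores first."""
    surplus = {s: d["stock"] - d["forecast"] for s, d in stores.items()}
    plan = []
    for short, gap in sorted(surplus.items(), key=lambda kv: kv[1]):
        if gap >= 0:
            break  # stores are sorted by surplus, so no remaining store is short
        donors = sorted(
            (s for s, v in surplus.items() if v > 0),
            key=lambda s: dist(stores[short]["xy"], stores[s]["xy"]),
        )
        need = -gap
        for donor in donors:
            qty = min(need, surplus[donor])
            plan.append((donor, short, qty))  # (from_store, to_store, units)
            surplus[donor] -= qty
            need -= qty
            if need == 0:
                break
    return plan


print(replenishment_plan(STORES))  # [('S2', 'S1', 80)]
```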

The common thread: start with workflow automation on governed, semantically connected data – then layer agents on top. The semantic layer makes each subsequent use case faster and cheaper to deliver.

EU AI Act and Data Readiness: The Compliance Angle

For European organizations – especially in the DACH region – ServiceNow AI data readiness carries regulatory urgency. The EU AI Act entered into force in August 2024, and its obligations are phasing in over the following years. Its requirements map directly to the Connect → Contextualize → Control framework: lineage and traceability, data quality certification, full auditability of AI-driven decisions, and purpose limitation controlling which data reaches which agents.
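Purpose limitation is the requirement engineers tend to find least familiar. As a minimal sketch of the principle, assuming a hypothetical registry of allowed purposes per dataset and a registered purpose per agent, an agent only sees datasets declared for its purpose. This illustrates the idea; it is neither a legal reading of the AI Act nor a description of how ServiceNow implements it.

```python
# Hypothetical registry: which purposes each dataset may be used for,
# and which purpose each agent is registered under.
DATASET_PURPOSES = {
    "hr.employee_health": {"occupational_safety"},
    "crm.contacts": {"customer_support", "marketing"},
    "itsm.incidents": {"customer_support", "it_operations"},
}
AGENT_PURPOSE = {"support-triage-agent": "customer_support"}


def datasets_visible_to(agent: str) -> list[str]:
    """Expose only datasets whose declared purposes include the agent's purpose."""
    purpose = AGENT_PURPOSE.get(agent)
    if purpose is None:
        return []  # unregistered agents see nothing
    return sorted(d for d, purposes in DATASET_PURPOSES.items() if purpose in purposes)


print(datasets_visible_to("support-triage-agent"))  # ['crm.contacts', 'itsm.incidents']
```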

Organizations that build their AI strategy on the data readiness framework aren’t just scaling faster – they’re building EU AI Act compliance into the foundation rather than retrofitting it later.

Related reading: For how ServiceNow governance structures support regulatory readiness, see ServiceNow Governance Roles and Responsibilities Explained and ServiceNow Governance: How to Stay in Control as Your Platform Grows.

For Architects: Under the Hood of Workflow Data Fabric

Everything above frames the what and why. If you’re an IT architect evaluating ServiceNow AI data readiness for your enterprise, this section goes one layer deeper into the technical mechanisms and real-world trade-offs.

How ServiceNow Zero-Copy Connectors Actually Work

“Zero-copy” sounds elegant in a slide deck. Architecturally, it’s a federated data access pattern. ServiceNow zero-copy connectors create virtual table representations within the platform – metadata pointers that reference external data sources (SAP, Databricks, Snowflake, etc.) without replicating the underlying records. When a workflow or agent needs data, the platform issues a real-time API call to the source system, retrieves the relevant subset, and operates on it within the ServiceNow transaction context.
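The pattern can be sketched in a few lines of Python. `VirtualTable` and `fake_sap_fetch` are hypothetical: the fetch function simulates the remote call, and the “remote” rows live in a local list purely so the example runs. The sketch shows the shape of the pattern, not the connector’s real interface: the table object holds only metadata, and each query pushes filters and column selection to the source so that only the requested subset comes back.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

# A fetcher stands in for the remote call to the source system (SAP, Snowflake, ...).
Fetcher = Callable[[dict, list[str]], Iterable[dict]]


@dataclass(frozen=True)
class VirtualTable:
    """Hypothetical metadata pointer: knows where the data lives, stores no rows."""
    name: str
    source: str
    columns: tuple[str, ...]
    fetch: Fetcher

    def query(self, where: dict, select: list[str]) -> list[dict]:
        unknown = set(select) - set(self.columns)
        if unknown:
            raise ValueError(f"{self.name} has no columns {sorted(unknown)}")
        # Push the filter and projection down to the source; only the subset comes back.
        return list(self.fetch(where, select))


# Simulated remote system for the sketch: filtering happens "on the source side".
_REMOTE_ROWS = [
    {"material": "M-1", "plant": "DE01", "qty": 12},
    {"material": "M-2", "plant": "DE01", "qty": 0},
    {"material": "M-1", "plant": "LT01", "qty": 7},
]


def fake_sap_fetch(where: dict, select: list[str]) -> Iterable[dict]:
    for row in _REMOTE_ROWS:
        if all(row.get(k) == v for k, v in where.items()):
            yield {c: row[c] for c in select}


stock = VirtualTable("x_sap_stock", "SAP S/4HANA", ("material", "plant", "qty"), fake_sap_fetch)
print(stock.query({"material": "M-1"}, ["plant", "qty"]))
# [{'plant': 'DE01', 'qty': 12}, {'plant': 'LT01', 'qty': 7}]
```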

The practical implications architects must evaluate:

Latency. You’re trading storage duplication for runtime query latency. For high-throughput, sub-second use cases (e.g., real-time event correlation across 10,000+ CIs), zero-copy may introduce unacceptable delays depending on the source system’s API response time. The cosmetics retailer case is instructive – they used zero-copy for Databricks sales analytics, but the query scope was deliberately narrow: specific products, specific stores, specific campaign windows. Not a full dataset scan.

Source system availability. If your SAP system is down for maintenance, your ServiceNow workflow that depends on a zero-copy connector to SAP is also impacted. Architects need to design for graceful degradation – fallback behaviors, cached last-known-good states, and clear SLAs on source system uptime.

Data volume boundaries. Zero-copy works well for transactional lookups and contextual queries. It’s not designed to replace a data warehouse for bulk analytical workloads. The pattern is “reach out and get what you need” – not “bring everything over and run reports.”
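One way to keep zero-copy usage inside those boundaries is a guard that rejects unfiltered or unbounded queries before they reach the source. The 500-row budget and the `check_federated_query` function below are hypothetical; the right limits depend on the source system’s API and your latency SLAs.

```python
from typing import Optional

MAX_ROWS = 500  # hypothetical per-call row budget for a federated lookup


def check_federated_query(where: dict, limit: Optional[int]) -> int:
    """Allow targeted lookups; reject scans that belong in the data warehouse."""
    if not where:
        raise ValueError("federated queries need at least one filter; run bulk scans in the warehouse")
    if limit is None or not 1 <= limit <= MAX_ROWS:
        raise ValueError(f"limit must be between 1 and {MAX_ROWS}")
    return limit


print(check_federated_query({"store": "S1", "campaign": "SPRING-26"}, limit=100))  # 100
```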

We’ve seen similar integration decisions in our own work. When we migrated ITSM processes and CMDB data for a European bank, the critical question was the same: where does the system of record live, and how do we ensure data integrity without creating parallel silos?

ServiceNow Knowledge Graph and the Semantic Layer

The ServiceNow Knowledge Graph is not a separate database you deploy alongside your instance. It’s an abstraction layer built on top of the platform’s existing relational data model – the CMDB, task tables, user records, group structures – enhanced with semantic metadata that defines relationships, business meanings, and ontological hierarchies.

Think of it as CSDM (Common Service Data Model) taken further. Where CSDM gives you a standard taxonomy for services, applications, and infrastructure, the Knowledge Graph extends that into a broader semantic web: how a “customer” in CRM relates to a “requestor” in CSM, how a “material master” in SAP maps to a “catalog item” in ServiceNow, and how approval chains differ by entity type and geography.

The ontology layer is built through canonical data models. When you connect an external system via zero-copy, you don’t just map fields to fields. You map external entities to semantic concepts in the ServiceNow ontology. This is what allows an agent to reason across systems rather than querying two separate databases and hoping the join key matches.
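Here is a minimal sketch of the difference between mapping fields to fields and mapping them to a canonical concept. The mappings are hypothetical (though `MATNR` and `MAKTX` are the standard SAP field names for material number and material description), and we assume, for the sketch, that SAP returns numeric material numbers zero-padded while the analytics platform stores them unpadded. A naive field-to-field join on these raw keys would silently return nothing.

```python
# Hypothetical mappings from two sources onto one canonical concept, "Material".
SAP_TO_CANONICAL = {"MATNR": "material_id", "MAKTX": "description"}
DATABRICKS_TO_CANONICAL = {"sku": "material_id", "units_sold_7d": "units_sold_7d"}


def to_canonical(row: dict, mapping: dict) -> dict:
    """Rename source fields to canonical names, dropping fields the model doesn't define."""
    return {mapping[k]: v for k, v in row.items() if k in mapping}


def canonical_material_id(value: str) -> str:
    # Assumption for this sketch: one source zero-pads numeric IDs, the other doesn't.
    return value.lstrip("0") or "0"


def join_on_material(sap_rows: list[dict], analytics_rows: list[dict]) -> list[dict]:
    """Join two sources on the canonical key rather than on raw, source-specific fields."""
    by_id = {}
    for row in sap_rows:
        rec = to_canonical(row, SAP_TO_CANONICAL)
        by_id[canonical_material_id(rec["material_id"])] = rec
    joined = []
    for row in analytics_rows:
        rec = to_canonical(row, DATABRICKS_TO_CANONICAL)
        key = canonical_material_id(rec["material_id"])
        if key in by_id:
            joined.append({**by_id[key], **rec, "material_id": key})
    return joined


sap_rows = [{"MATNR": "000000000000004711", "MAKTX": "Hydrating serum 50 ml"}]
analytics_rows = [{"sku": "4711", "units_sold_7d": 930}]
print(join_on_material(sap_rows, analytics_rows))
# [{'material_id': '4711', 'description': 'Hydrating serum 50 ml', 'units_sold_7d': 930}]
```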

The real challenge: building and maintaining these canonical models is human work. The technology provides the framework, but someone still has to get cross-functional stakeholders to agree on shared definitions. In our experience, this data modeling and stakeholder alignment phase is the single biggest variable in project timelines.

Our LTG Railways engagement illustrates what it takes: CSDM 4.0 alignment, CI ownership matrices, health rules, staleness checks, service mapping – all the “contextualize” work that makes the Knowledge Graph useful rather than theoretical.
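Individual health rules are often simple to express; the hard part is agreeing on them and running them continuously. The sketch below applies two rules, ownership and staleness, to hypothetical CI records. The field names are illustrative rather than the actual CMDB table schema, and the 30-day threshold is an assumption.

```python
from datetime import datetime, timedelta

# Hypothetical CI records; field names are illustrative, not the actual CMDB schema.
CIS = [
    {"name": "app-srv-01", "owner": "Infra Team", "last_discovered": datetime(2026, 4, 1)},
    {"name": "app-srv-02", "owner": None, "last_discovered": datetime(2026, 4, 2)},
    {"name": "db-srv-07", "owner": "DBA Team", "last_discovered": datetime(2025, 12, 1)},
]


def health_findings(cis: list[dict], now: datetime, stale_after: timedelta) -> list[tuple[str, str]]:
    """Apply two simple health rules: every CI needs an owner and recent discovery data."""
    findings = []
    for ci in cis:
        if not ci["owner"]:
            findings.append((ci["name"], "missing owner"))
        if now - ci["last_discovered"] > stale_after:
            findings.append((ci["name"], "stale"))
    return findings


print(health_findings(CIS, now=datetime(2026, 4, 6), stale_after=timedelta(days=30)))
# [('app-srv-02', 'missing owner'), ('db-srv-07', 'stale')]
```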

Deterministic vs. Probabilistic Agents: The Honest Nuance

The framing – “our agents are deterministic, not probabilistic” – is ServiceNow’s strongest architectural argument, but it deserves precision.

What’s actually deterministic: the workflow execution. When an agent decides to trigger a warehouse transfer, the subsequent steps – API calls to SAP, approval routing, notification flows, audit logging – follow a governed, pre-defined path.

What’s still probabilistic: the reasoning layer. When a user says “I want to move product X from warehouse A to B,” the NLU interpretation and the agent’s decision about which workflow to invoke involve LLM-based probabilistic reasoning.

Why this still matters: the architecture constrains probabilistic reasoning within deterministic guardrails. The agent can reason freely about what to do, but the execution follows governed, auditable workflow paths. An LLM hallucination in the reasoning layer might lead to a wrong workflow being suggested – but it can’t silently execute an unapproved action, because the governance layer enforces checkpoints regardless.
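The “AI recommends, platform enforces” split can be sketched as a deterministic layer that validates whatever the reasoning layer proposes. In the sketch below, the proposal dictionary stands in for LLM output, and `REGISTRY`, `WorkflowSpec`, and `execute_proposal` are hypothetical names. Unknown workflows are rejected, incomplete arguments are rejected, and approval-gated workflows are parked until approved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowSpec:
    """Hypothetical registered workflow: the only paths an agent's proposal can trigger."""
    required_fields: frozenset[str]
    needs_approval: bool


REGISTRY = {
    "warehouse_transfer": WorkflowSpec(frozenset({"material", "from_wh", "to_wh", "qty"}), True),
    "stock_inquiry": WorkflowSpec(frozenset({"material"}), False),
}


def execute_proposal(proposal: dict, approved: bool) -> str:
    """The LLM supplies `proposal`; this deterministic layer decides what actually runs."""
    spec = REGISTRY.get(proposal.get("workflow"))
    if spec is None:
        return "rejected: unknown workflow"
    missing = spec.required_fields - set(proposal.get("args", {}))
    if missing:
        return f"rejected: missing {sorted(missing)}"
    if spec.needs_approval and not approved:
        return "pending: routed for approval"
    return f"executed: {proposal['workflow']}"


transfer = {
    "workflow": "warehouse_transfer",
    "args": {"material": "M-1", "from_wh": "A", "to_wh": "B", "qty": 10},
}
print(execute_proposal({"workflow": "delete_database"}, approved=True))  # rejected: unknown workflow
print(execute_proposal(transfer, approved=False))  # pending: routed for approval
```

Even a confidently wrong proposal can only land on a registered, approval-aware path; the worst case is a rejected or parked request, not an unapproved action.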

This is genuinely different from architectures where an LLM has direct API access without workflow constraints. The ServiceNow model is closer to “AI recommends, platform enforces.”

For how governance enforcement works in practice, our post on Agentic AI & Governance Topology walks through the architecture.

What ServiceNow AI Data Readiness Doesn’t Solve

No framework is complete. Architects should be clear-eyed about the boundaries:

Data quality at the source. Zero-copy connectors and semantic layers don’t fix garbage data in your SAP master records. Source data quality remains a prerequisite – data readiness starts before ServiceNow enters the picture.

Heavily customized ERP data models. If your SAP instance has 15 years of Z-tables and custom transaction codes, the canonical model mapping effort grows non-linearly.

Real-time at scale. Zero-copy works for targeted, transactional queries. Continuous streaming analytics across millions of records still needs a dedicated data platform, with ServiceNow orchestrating actions on the results.

Organizational readiness. The hardest part of “contextualize” isn’t the technology – it’s getting cross-functional stakeholders to agree on shared definitions. The governance roles framework addresses this organizational layer.

From Our Delivery Practice: Where Data Readiness Shows Up First

In our experience, ServiceNow AI data readiness challenges surface most visibly in three areas:

CMDB health. If Discovery is incomplete and CI relationships are broken, every downstream process is built on a shaky foundation. This was the core of our LTG Railways work: health rules, automated remediation, and Data Manager policies before anything intelligent could be layered on top.

Cross-system process orchestration. Our FSM External Vendor Portal case involved building a React-based interface for external vendors interacting with distributed work orders – data flowing accurately between ServiceNow and the vendor ecosystem without creating stale state.

Identity and access governance. Our Clear Skye IGA implementation automated identity lifecycle management natively on ServiceNow – the control layer that must exist before autonomous agents operate safely.

The Entity Risk Intelligence Agent we built is the clearest example of the full Connect → Contextualize → Control → Act pattern: connecting 230,000+ global data sources, applying semantic understanding to risk signals, operating under governance guardrails, and taking autonomous compliance action.

Where to Start: Three Data Readiness Questions Every Architect Should Answer

If you’re evaluating your organization’s ServiceNow AI data readiness, bring these diagnostic questions to your next architecture review:

On Connect: Can you access all the data your AI use cases need – from ServiceNow, ERP, data lakes, unstructured sources – without creating new copies or silos? Do you know the latency characteristics and availability SLAs of each source system?

On Contextualize: Do you have a shared semantic model that defines what your business terms mean across departments? Is your CMDB aligned to CSDM with proper service mapping, or is it an inventory list nobody trusts?

On Control: If an AI agent made a decision in your environment today, could you explain where the data came from, who approved the source, and whether it was the most current version? Could you pass an EU AI Act audit on data lineage and decision traceability?

Where you find gaps is where you start. The good news: the case studies above show that meaningful progress can happen in weeks, not years, when the foundation work is scoped correctly.

Next step: If you want to assess your organization’s AI data readiness across data, governance, and automation maturity, our GenAI Assessment is designed to give you a clear starting point and a prioritized roadmap.

Frequently Asked Questions

What is ServiceNow AI data readiness?

ServiceNow AI data readiness is the process of ensuring enterprise data is connected to the platform via zero-copy connectors, contextualized with semantic meaning through the Knowledge Graph, and governed with automated policy-based controls – all before deploying AI agents or intelligent workflows. Without data readiness, AI pilots typically fail due to fragmented, inconsistent, or ungoverned data.

What is ServiceNow Workflow Data Fabric?

ServiceNow Workflow Data Fabric is an integrated data layer that connects enterprise data from any source, contextualizes it through a semantic layer and Knowledge Graph, and applies governance controls – enabling AI agents and workflows to access trusted, real-time data without duplicating or moving it from systems like SAP, Databricks, or Snowflake.

How do ServiceNow zero-copy connectors work?

Zero-copy connectors create virtual table representations that reference external data sources without replicating records. When a workflow or agent needs data, the platform issues a real-time API call to the source system, retrieves the relevant subset, and operates on it within the ServiceNow transaction context. This eliminates data duplication and ensures data freshness.

What is the ServiceNow Knowledge Graph?

The ServiceNow Knowledge Graph is a semantic abstraction layer built on top of the platform’s relational data model. It extends CSDM by defining relationships, business meanings, and ontological hierarchies across people, roles, processes, tasks, systems, and data. It enables AI agents to understand context and reason across connected systems.

Why do most enterprise AI pilots fail?

Research from MIT, together with Gartner’s forecasts, indicates that the majority of enterprise AI pilots fail to reach production because of data quality, fragmentation, and lack of governance – not AI model quality. Organizations solving the data readiness problem first are the ones successfully scaling AI in production.

How does ServiceNow data readiness relate to EU AI Act compliance?

The Connect → Contextualize → Control framework maps directly to EU AI Act requirements: data lineage and traceability, data quality certification, auditability of AI-driven decisions, and purpose limitation. Organizations building on this framework embed compliance into the foundation.

The Bottom Line

The market is flooded with AI capability announcements. New skills, new agents, new models every quarter. But the organizations that are actually scaling AI in production – not just running demos – are the ones that solved the data readiness problem first.

ServiceNow AI data readiness isn’t glamorous. It doesn’t make for exciting keynote slides. But it’s the difference between an AI pilot that impresses a steering committee and an AI platform that transforms how a business operates.

Connect your data without copying it. Give it meaning through a semantic layer. Govern it with automated, workflow-driven controls. Then – and only then – let the agents act.

That’s data readiness. Everything else is a demo.

Kostya Bazanov, Managing Director, Apr 06, 2026


Latest Articles

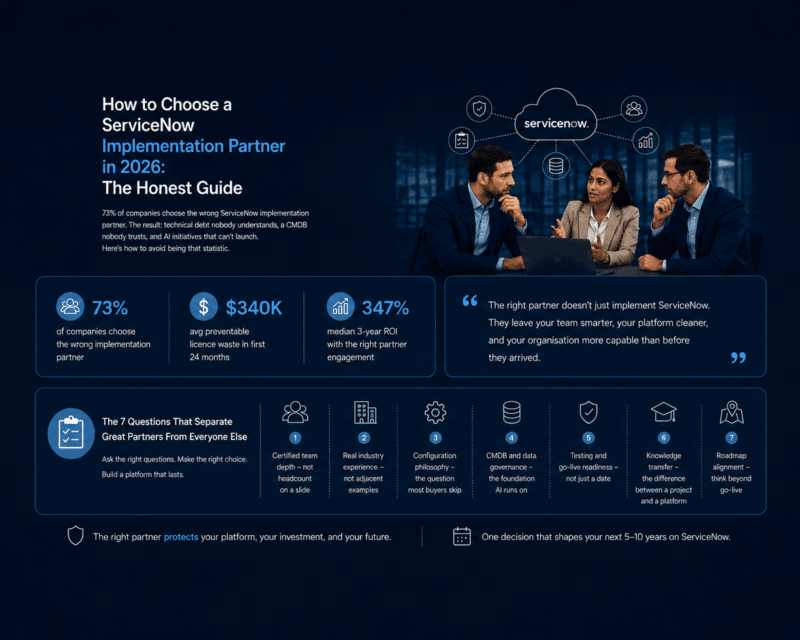

How to Choose a ServiceNow Implementation Partner in 2026

73% of companies choose the wrong ServiceNow implementation partner. The result: technical debt nobody understands, a CMDB nobody trusts, and AI initiatives that can't launch. Here's how to avoid being that statistic.

read more

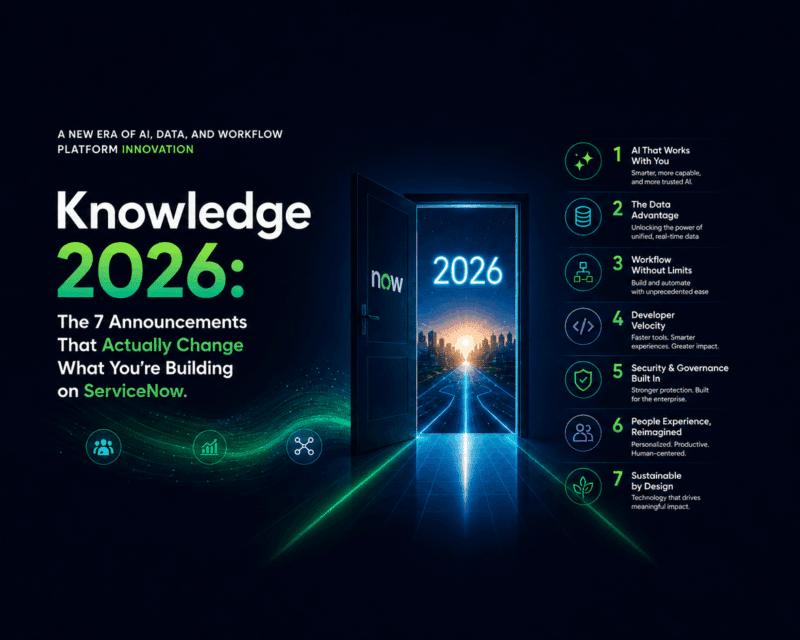

Knowledge 2026: The 7 Announcements That Actually Change What You’re Building on ServiceNow

25,000 people. Three days in Las Vegas. One signal that’s impossible to ignore: the era of AI that advises but stops short of execution is over. Here’s what actually matters — and what you need to do about it now.

read more

Only 11% of AI Agent Projects Reach Production — Here’s Why

Six months ago, your company likely launched an AI agent pilot. The demo worked. The vision was clear. Leadership was aligned. And yet today, it is still in testing. This is not an exception. It is the norm.

read more